CSAT Survey Best Practices: 50+ Example Questions & Free Template

Customer satisfaction surveys remain one of the most direct, reliable ways to understand how customers feel about your product, support, and overall experience. Yet most companies get them wrong — asking too many questions, sending them at the wrong time, or failing to act on the results.

In this guide, you'll find 50+ customer satisfaction survey questions organized by use case, free downloadable templates, and a step-by-step framework for building a customer feedback survey that delivers actionable data. Whether you're measuring post-onboarding satisfaction, evaluating support quality, or tracking long-term loyalty, this resource gives you everything you need to start collecting meaningful feedback today.

What Is a Customer Satisfaction Survey?

A customer satisfaction survey (often called a CSAT survey or customer feedback survey) is a structured questionnaire designed to measure how satisfied customers are with your product, service, or a specific interaction. The results produce a quantifiable score — typically the Customer Satisfaction Score (CSAT) — that teams use to benchmark performance, identify weak points, and track improvement over time.

Customer satisfaction surveys can be as short as a single question ("How satisfied are you with our service?") or as detailed as a multi-section questionnaire covering product quality, support experience, and likelihood to recommend.

How to calculate your CSAT score:

CSAT (%) = (Number of satisfied responses ÷ Total responses) × 100

For example, if 80 out of 100 respondents rate their experience a 4 or 5 on a 5-point scale, your CSAT score is 80%. A score above 75% is generally considered good, though benchmarks vary by industry.
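If you export raw ratings from your survey tool, the formula above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed implementation: the function name `csat_score` and the `satisfied_min` parameter are our own assumptions, and "satisfied" is taken to mean a 4 or 5 on a 5-point scale, as in the example.

```python
def csat_score(ratings, satisfied_min=4):
    """CSAT (%) = satisfied responses / total responses * 100.

    `satisfied_min` is an assumed cutoff: ratings at or above it count as satisfied.
    """
    if not ratings:
        raise ValueError("need at least one response")
    satisfied = sum(1 for r in ratings if r >= satisfied_min)
    return 100 * satisfied / len(ratings)


# Illustrative data only: 80 of 100 respondents gave a 4 or 5.
ratings = [5] * 50 + [4] * 30 + [3] * 10 + [2] * 6 + [1] * 4
print(csat_score(ratings))  # prints 80.0
```

If you survey on a 1–7 scale instead, adjust `satisfied_min` to whatever cutoff your team has agreed counts as "satisfied".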

Organizations use customer satisfaction survey data to identify trends, prioritize improvements, validate product changes, and strengthen customer relationships. According to Bain & Company, increasing customer retention by just 5% can increase profits by 25–95% — and satisfaction surveys are one of the most effective tools for understanding what drives retention.

Why Customer Satisfaction Surveys Matter

Measuring customer satisfaction isn't about ticking a box. It's about building a customer-centric culture where feedback loops drive continuous improvement. Here's why every B2B company should run regular customer feedback surveys:

They reveal what customers actually think. A Bain & Company study found that 80% of companies believe they deliver a superior customer experience, but only 8% of customers agree. Surveys close that perception gap by giving you unfiltered, structured insight into how customers experience your product and service.

They drive product and service improvements. Customer feedback provides concrete data to guide development priorities. A PwC study found that 73% of customers cite experience as an important factor in purchasing decisions. When customers tell you exactly what's working and what isn't, you can allocate resources where they matter most.

They increase loyalty and reduce churn. Regularly asking for feedback — and acting on it — demonstrates you value the customer relationship. Salesforce research shows that 80% of customers expect companies to use their feedback to improve. Meeting that expectation builds trust, which is the foundation of long-term retention. And when you use survey data to improve your customer onboarding process, the impact compounds — satisfied onboarded customers are far less likely to churn in the first 90 days.

They enable benchmarking over time. Without a consistent measurement system, you're guessing. Customer satisfaction surveys create a baseline against which you can measure the impact of every operational change, product update, or process improvement. The American Customer Satisfaction Index (ACSI) provides industry-level benchmarks, but your internal trends matter even more.

They fuel business growth. Satisfied customers buy more, renew at higher rates, and refer others. Data-driven companies that collect and act on customer feedback are 8.5 times more likely to be profitable year-over-year, according to Forrester.

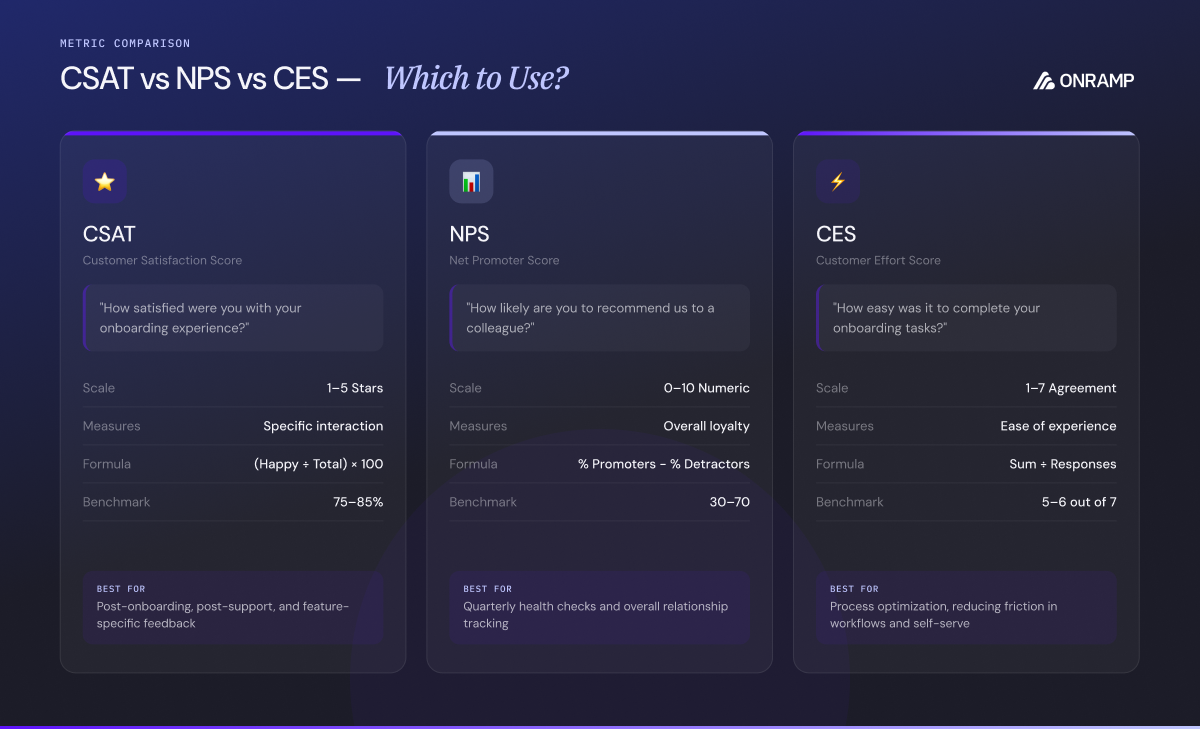

3 Core Customer Satisfaction Metrics: CSAT vs. NPS vs. CES

Before building your customer satisfaction questionnaire, decide which metrics align with your goals. Most customer feedback programs use one or more of these three:

CSAT (Customer Satisfaction Score)

CSAT measures short-term, transactional satisfaction — how a customer feels about a specific interaction, feature, or experience.

Typical question: "How satisfied are you with [product/service/interaction]?" rated on a 1–5 or 1–7 scale.

When to use it: After support tickets, post-onboarding, following product updates, or at any key touchpoint.

Benchmark: Scores above 75% are generally considered good. Above 85% is excellent.

NPS (Net Promoter Score)

NPS measures long-term loyalty and advocacy by asking how likely a customer is to recommend you.

Typical question: "On a scale of 0–10, how likely are you to recommend [company] to a friend or colleague?"

How it works: Respondents are classified as Promoters (9–10), Passives (7–8), or Detractors (0–6). Your NPS = % Promoters − % Detractors.

When to use it: Quarterly check-ins, 2–3 months before renewal, or as part of a recurring relationship health cadence. NPS is also a critical component of your customer health score.

Benchmark: An NPS above 30 is good. Above 50 is excellent.
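The NPS arithmetic described above can be sketched as follows. The function name `nps` and the sample scores are illustrative assumptions; the Promoter and Detractor cutoffs are the ones given in this section.

```python
def nps(scores):
    """NPS = % Promoters (9-10) minus % Detractors (0-6). Passives (7-8) only affect the total."""
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Illustrative data only: 5 Promoters, 2 Passives, 3 Detractors.
scores = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(nps(scores))  # prints 20.0
```

Note that NPS is reported as a number between -100 and 100, not as a percentage.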

CES (Customer Effort Score)

CES evaluates how easy it was for a customer to complete a task or resolve an issue.

Typical question: "How easy was it to [resolve your issue / complete onboarding / find what you needed]?" rated on a scale of 1 (very difficult) to 7 (very easy).

When to use it: After support interactions, post-onboarding, or after self-service workflows.

Benchmark: Higher scores are better. A CES above 5 on a 7-point scale indicates low-effort experiences.
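As a rough sketch, CES can be computed as the average rating. This assumes you report CES as a simple mean on the 1 (very difficult) to 7 (very easy) scale, which is one common convention; the function name `ces_score` and the sample ratings are illustrative.

```python
def ces_score(ratings):
    """Average effort rating on a 1 (very difficult) to 7 (very easy) scale."""
    if not ratings:
        raise ValueError("need at least one response")
    return sum(ratings) / len(ratings)


# Illustrative data only.
ratings = [6, 7, 5, 4, 6]
score = ces_score(ratings)
print(score, score > 5)  # prints 5.6 True
```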

| Metric | What It Measures | Best Used For | Scale |
|---|---|---|---|
| CSAT | Transactional satisfaction | Post-interaction feedback | 1–5 or 1–7 |
| NPS | Long-term loyalty | Relationship health checks | 0–10 |
| CES | Ease of experience | Support & onboarding evaluation | 1–7 |

50+ Customer Satisfaction Survey Questions (by Category)

Below is a comprehensive question bank for your customer satisfaction survey. Pick and choose the questions that match your goals — we recommend limiting any single survey to 5–7 questions for optimal completion rates.

Post-Onboarding Survey Questions

These questions measure how effectively your team guided new customers through implementation. They're especially valuable for B2B SaaS companies where onboarding directly impacts retention.

- How satisfied are you with the overall onboarding experience? (1–7 scale)

- Did the onboarding process meet your initial expectations?

- How clear were the instructions and next steps provided during onboarding?

- How easy was it to get set up and start using [product name]?

- How responsive was your onboarding team when you had questions?

- Did you achieve your first key milestone during onboarding? (Yes/No)

- How confident do you feel using [product name] independently?

- What, if anything, would you change about the onboarding experience? (Open-ended)

Pro tip: Send post-onboarding surveys within 24–48 hours of onboarding completion. Waiting too long lets the experience fade, and you'll get vaguer feedback. At OnRamp, we recommend building automated satisfaction checkpoints directly into your customer onboarding checklist.

Post-Support & Post-Interaction Questions

Use these after a customer contacts your support team or completes a key service interaction.

- How satisfied are you with the support you received? (1–7 scale)

- Was your issue resolved to your satisfaction? (Yes/No)

- How would you rate the knowledge of the support representative?

- How long did you wait before receiving help?

- How easy was it to reach a support representative?

- Did you feel the support team understood your issue?

- Please rate the overall quality of the support experience. (1–7 scale)

- What could we do to improve our support process? (Open-ended)

Product & Feature Satisfaction Questions

These help your product team understand how customers perceive specific features and overall product quality.

- How satisfied are you with [product name] overall? (1–7 scale)

- How well does [product name] meet your business needs?

- Which feature do you find most valuable? (Multiple choice or open-ended)

- Which feature do you find least valuable or most frustrating?

- How would you rate the reliability of [product name]? (1–7 scale)

- How satisfied are you with the frequency and quality of product updates?

- Is there a feature you wish [product name] had? (Open-ended)

- How does [product name] compare to alternatives you've used?

NPS & Loyalty Follow-Up Questions

Start with the standard NPS question, then dig deeper to understand the "why" behind the score.

- On a scale of 0–10, how likely are you to recommend [company name] to a friend or colleague?

- What is the primary reason for your score? (Open-ended)

- What would we need to do to earn a higher score?

- Have you already recommended [company name] to someone? (Yes/No)

- If we made improvements based on your feedback, would you be willing to update your rating?

- Compared to 6 months ago, has your overall satisfaction with [company name] improved, stayed the same, or declined?

Customer Effort & Usability Questions

Low-effort experiences drive loyalty. These questions identify friction points across your product and processes.

- How easy was it to complete [specific task] today? (1–7 scale)

- Did you experience any difficulties navigating [product/website/app]?

- How intuitive did you find the interface? (1–7 scale)

- Were the instructions and labels clear and easy to understand?

- Did you encounter any technical issues or errors?

- How easy is it to find the information you need in our help documentation?

- On a scale of 1–7, how much effort did you personally have to put forth to resolve your issue?

Open-Ended Customer Feedback Questions

Open-ended questions capture qualitative insights that structured questions miss. Include at least one in every customer feedback survey.

- What do you like most about working with [company name]?

- What do you like least about working with [company name]?

- If you could change one thing about our product or service, what would it be?

- Is there anything we could do to make your experience better?

- How would you describe [company name] to a colleague in one sentence?

- What nearly caused you to stop using our product? (For at-risk accounts)

Renewal & Relationship Health Questions

Send these 2–3 months before renewal to identify risks and reinforce value. These feed directly into your customer health scoring system.

- How satisfied are you with the overall value you receive for the price? (1–7 scale)

- How likely are you to renew your subscription? (Very likely / Somewhat likely / Neutral / Unlikely / Very unlikely)

- Has [product name] delivered on the outcomes you expected when you signed up?

- How satisfied are you with the level of communication from our team?

- Are there any unresolved issues that would affect your renewal decision?

- What could we do in the next 90 days to strengthen our partnership? (Open-ended)

Demographic & Segmentation Questions

Use sparingly — these help you segment results by persona, company size, or lifecycle stage.

- What is your role within your organization? (Dropdown)

- How long have you been a customer? (Dropdown)

- How frequently do you use [product name]? (Daily / Weekly / Monthly / Rarely)

- What is your company's size? (1–50 / 51–200 / 201–1000 / 1000+)

- How did you first hear about [company name]?

Free Customer Satisfaction Survey Templates

We've created ready-to-use templates so you can launch your first customer feedback survey in minutes instead of hours. Each template includes pre-written questions, recommended scales, and distribution timing guidance.

Template 1: Post-Onboarding CSAT Survey (5 Questions)

Best for: Measuring satisfaction immediately after onboarding completion.

| # | Question | Type |
|---|---|---|
| 1 | How satisfied are you with the overall onboarding experience? | 1–7 scale |
| 2 | How clear were the instructions and next steps provided? | 1–7 scale |
| 3 | How confident do you feel using [product] independently? | 1–7 scale |
| 4 | How likely are you to recommend our onboarding process to a colleague? | 0–10 NPS |
| 5 | What could we improve about onboarding? | Open-ended |

When to send: 24–48 hours after onboarding completion.

Template 2: Post-Support Interaction Survey (4 Questions)

Best for: Evaluating support quality after a ticket is resolved.

| # | Question | Type |
|---|---|---|
| 1 | How satisfied are you with the support you received? | 1–7 scale |
| 2 | Was your issue fully resolved? | Yes / No / Partially |
| 3 | How easy was it to get the help you needed? | 1–7 CES |
| 4 | Any additional comments? | Open-ended |

When to send: Immediately after ticket resolution.

Template 3: Quarterly Relationship Health Survey (6 Questions)

Best for: Recurring check-ins with active accounts. Results can inform your customer engagement strategy.

| # | Question | Type |
|---|---|---|
| 1 | How satisfied are you with [product] overall? | 1–7 scale |
| 2 | How likely are you to recommend [company] to a colleague? | 0–10 NPS |
| 3 | What is the primary reason for your score? | Open-ended |
| 4 | How well does [product] meet your business needs? | 1–7 scale |
| 5 | How satisfied are you with our team's communication? | 1–7 scale |
| 6 | What could we do to improve your experience in the next quarter? | Open-ended |

When to send: Quarterly, or 2–3 months before renewal.

Template 4: Pre-Renewal Risk Assessment (5 Questions)

Best for: Identifying churn risk before renewal conversations begin.

| # | Question | Type |
|---|---|---|
| 1 | How likely are you to renew? | Very likely → Very unlikely |
| 2 | How satisfied are you with the value you receive for the price? | 1–7 scale |
| 3 | Has [product] delivered on the outcomes you expected? | Yes / Partially / No |
| 4 | Are there unresolved issues that affect your renewal decision? | Yes / No |
| 5 | What could we do in the next 90 days to strengthen our partnership? | Open-ended |

When to send: 60–90 days before renewal date.

📥 Download All 4 Templates as a Google Sheet → Includes pre-built scoring formulas, response tracking, and a CSAT calculation dashboard.

How to Create a Customer Satisfaction Survey in 5 Steps

Step 1: Define Your Objective

Every effective survey starts with a clear goal. Ask yourself: what specific decision will this data inform? Are you trying to improve your onboarding process? Evaluate support quality? Assess pre-renewal risk?

Your objective determines which questions to ask, when to send, and how to analyze results. If your aim is to improve onboarding, for example, your questions should focus on clarity, responsiveness, and confidence — not product pricing.

Actionable tip: Write down your primary question in a single sentence. Example: "Are customers confident using our product independently after onboarding?" Every survey question should connect back to this objective.

Step 2: Choose Your Metric and Survey Type

Based on your objective, decide whether you need CSAT, NPS, CES, or a combination. Each metric serves different purposes — refer to the comparison table above to choose the right fit.

For most use cases, we recommend a hybrid approach: one or two scaled questions (CSAT or CES), one NPS question, and one open-ended question. This gives you both quantitative trends and qualitative context in a single short survey.

Step 3: Write Clear, Unbiased Questions

Poorly worded questions produce unreliable data. Follow these principles:

Ask one thing at a time. "Did you enjoy our service and our new feature?" is a double-barreled question. If the service was great but the feature wasn't, the customer can't answer accurately. Split it into two questions.

Avoid leading language. Instead of "How amazing was our support?" use "How satisfied were you with the support you received?" Neutral phrasing produces honest responses.

Keep it concise. Aim for 5–7 questions maximum. Research consistently shows that survey completion rates drop sharply after 7 questions. Every unnecessary question reduces your response rate.

Use a consistent scale. Switching between a 1–5 scale, a 1–10 scale, and a 1–7 scale within the same survey confuses respondents. Pick one and stick with it. We recommend a 1–7 scale — it encourages more thoughtful answers than the more familiar 1–10 scale, which tends to produce inflated results.

Step 4: Design for Completion

Survey design directly impacts response rates. Here's what we've found works best after running customer feedback surveys across hundreds of B2B accounts:

Use a 1–7 scale instead of 1–10. A 1–10 scale is so familiar that respondents default to high numbers without thinking. A 1–7 scale prompts more deliberate responses.

Limit surveys to 5–7 questions. Long surveys lead to abandonment. You'd rather have 200 completed responses to 5 good questions than 40 partial responses to 20 mediocre ones.

Send from a generic company email. Surveys sent from surveys@yourcompany.com produce less biased results than those sent from an individual CSM's email, where personal relationships can skew scores.

Make most questions optional. Requiring answers to every question increases abandonment. Keep only your most critical 1–2 questions required and let respondents skip the rest.

Include one open-ended question. This is where the real insights live. Scaled questions tell you how much satisfaction has changed; open-ended responses tell you why.

Step 5: Test, Launch, and Iterate

Before going live, pilot your survey with 5–10 internal team members or a small customer segment. Look for confusing wording, unexpected interpretations, and any technical issues.

After launch, monitor response rates and completion rates. If your completion rate is below 60%, the survey may be too long or poorly timed. A/B test different subject lines, question orders, and send times to continuously improve performance.
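Here is a minimal sketch of the monitoring step, assuming your survey tool can export three counts: surveys sent, surveys started, and surveys completed. The function names, the definitions (response rate = started ÷ sent, completion rate = completed ÷ started), and the sample numbers are our own assumptions; the 60% threshold is the one mentioned above.

```python
def rate(numerator, denominator):
    """Percentage helper that avoids dividing by zero."""
    return 100 * numerator / denominator if denominator else 0.0


def survey_health(sent, started, completed, min_completion=60.0):
    """Summarize response and completion rates and flag a low completion rate."""
    completion_rate = rate(completed, started)
    return {
        "response_rate": rate(started, sent),
        "completion_rate": completion_rate,
        # True means the survey may be too long or poorly timed.
        "needs_review": completion_rate < min_completion,
    }


# Illustrative counts only.
print(survey_health(sent=400, started=120, completed=66))
# prints {'response_rate': 30.0, 'completion_rate': 55.0, 'needs_review': True}
```

Running this for each A/B variant gives you a like-for-like comparison of subject lines, question orders, and send times.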

When to Send Your Customer Feedback Survey

Timing is everything. Sending a survey at the wrong moment produces either irrelevant data or no data at all. Here are the highest-impact moments to deploy customer satisfaction surveys:

Post-onboarding completion. Right after a customer finishes the onboarding process is one of the most valuable feedback windows. The experience is fresh, emotions are high, and you can catch issues before they compound. Send within 24–48 hours of onboarding completion.

After support interactions. Trigger a short survey (2–3 questions) immediately after a support ticket is marked resolved. This captures the customer's experience while the details are still top of mind.

When a customer shows signs of disengagement. If product usage drops, support tickets increase, or onboarding milestones stall, a well-timed survey can surface the root cause. These signals are often visible in your customer health score before the customer says anything.

2–3 months before renewal. This is your window to identify and address concerns before the renewal conversation begins. Survey data from this timing directly informs your retention strategy and gives your team time to act.

After stakeholder changes. When a new champion or decision-maker joins the customer's team, their expectations may differ from the original buyer. A survey helps you recalibrate the relationship.

Quarterly as a relationship pulse check. Regular surveys create a longitudinal dataset that reveals satisfaction trends over time. These can also feed into your customer engagement metrics.

How to Distribute Your Survey

The best survey in the world is useless if nobody fills it out. Here's how to maximize response rates across common distribution channels:

Email surveys remain the most popular distribution method for B2B companies. Personalize the subject line, keep the email short, explain why you're asking, and set clear time expectations ("This takes about 90 seconds"). Send follow-up reminders to non-responders after 3 and 7 days.
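The email reminder cadence above could be scheduled with something like the sketch below. It assumes "after 3 and 7 days" means days counted from the original send date, and that you stop reminding anyone who has already responded; `reminder_dates` and `REMINDER_OFFSETS_DAYS` are hypothetical names, not part of any particular email tool.

```python
from datetime import date, timedelta

# Assumed interpretation: reminders go out 3 and 7 days after the first email.
REMINDER_OFFSETS_DAYS = (3, 7)


def reminder_dates(sent_on, has_responded=False):
    """Return the dates on which a non-responder should get a reminder."""
    if has_responded:
        return []
    return [sent_on + timedelta(days=offset) for offset in REMINDER_OFFSETS_DAYS]


# Survey first sent on 2 March 2026: reminders fall on 5 March and 9 March.
for reminder in reminder_dates(date(2026, 3, 2)):
    print(reminder.isoformat())
```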

In-app surveys capture feedback when customers are actively using your product. Trigger them after meaningful actions — completing a task, finishing onboarding, or using a new feature — not randomly. Keep in-app surveys to 1–2 questions.

Website pop-ups work best for transactional feedback. Use exit-intent triggers to avoid disrupting the user experience, and limit frequency to once per session.

Embedded in your onboarding platform. If you use a customer onboarding tool, embed satisfaction checkpoints directly into the workflow. This turns feedback collection into a natural part of the process rather than a separate outreach effort.

Interpreting and Acting on Survey Results

Collecting survey data is the easy part. Turning it into improved customer outcomes is where most companies fall short. Here's a framework for making your customer satisfaction survey data actionable:

Tie results to company OKRs. Link CSAT results to specific business objectives — customer retention targets, NPS improvement goals, or onboarding completion rates. When survey data connects to OKRs, it gets executive attention and budget.

Segment results by key criteria. Analyzing overall CSAT gives you a headline number, but segmenting by company size, customer persona, lifecycle stage, or onboarding cohort reveals where targeted improvements will have the biggest impact. For example, if enterprise accounts consistently score lower than SMBs on onboarding satisfaction, that's a signal to invest in a more hands-on onboarding experience for larger customers.
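To make the segmentation idea concrete, here is a small sketch that computes CSAT per segment. It assumes each response is a `(segment, rating)` pair on a 5-point scale and counts a 4 or 5 as satisfied; the function name and the sample data are made up for illustration.

```python
from collections import defaultdict


def csat_by_segment(responses, satisfied_min=4):
    """Return {segment: CSAT %} from (segment, rating) pairs."""
    totals = defaultdict(int)
    satisfied = defaultdict(int)
    for segment, rating in responses:
        totals[segment] += 1
        if rating >= satisfied_min:
            satisfied[segment] += 1
    return {seg: 100 * satisfied[seg] / totals[seg] for seg in totals}


# Made-up onboarding ratings for two segments.
responses = [
    ("enterprise", 3), ("enterprise", 4), ("enterprise", 2), ("enterprise", 5),
    ("smb", 5), ("smb", 4), ("smb", 4), ("smb", 2),
]
print(csat_by_segment(responses))  # prints {'enterprise': 50.0, 'smb': 75.0}
```

A gap like the one in this toy data is the kind of signal the enterprise-versus-SMB example describes. With real data, check that each segment has enough responses before drawing conclusions.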

Follow up with respondents. Thank every respondent. For detractors and low scorers, reach out personally within 48 hours. A direct follow-up shows the customer their feedback matters and gives you an opportunity to resolve issues before they escalate.

Close the feedback loop publicly. When you make changes based on survey data, tell customers. Share improvements in release notes, newsletters, or direct communications. This reinforces the message that their voice drives your roadmap and builds trust for future surveys.

Track trends, not just snapshots. A single survey is a data point. Multiple surveys over time reveal patterns. Is post-onboarding CSAT trending up after you redesigned the experience? Is NPS declining in a specific segment? Trend analysis is where the strategic value lives.
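A simple way to start with trend analysis is to compare each survey period's score with the previous one. The sketch below assumes you already have one CSAT percentage per period in chronological order; `csat_trend` and the quarterly figures are illustrative only.

```python
def csat_trend(period_scores):
    """Return (period, change vs. previous period) for chronologically ordered scores."""
    return [
        (label, round(score - previous_score, 1))
        for (_, previous_score), (label, score) in zip(period_scores, period_scores[1:])
    ]


# Made-up post-onboarding CSAT by quarter.
quarters = [("Q1", 72.0), ("Q2", 75.5), ("Q3", 81.0)]
print(csat_trend(quarters))  # prints [('Q2', 3.5), ('Q3', 5.5)]
```

Positive changes mean satisfaction is trending up. Pair this with segment-level scores to see where the movement is coming from.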

Common Customer Satisfaction Survey Mistakes to Avoid

Even experienced teams make these errors. Here's what to watch for:

Asking too many questions. This is the most common mistake. Every additional question reduces your completion rate. If you can't explain why a question is on the survey and what action you'll take based on the answer, remove it.

Using double-barreled questions. "Did you enjoy our product and customer service?" asks two things at once. Customers can't respond accurately when they feel differently about each part.

Failing to act on results. If customers give you feedback and nothing changes, they'll stop responding. Worse, they'll feel ignored. Every survey should have a clear action plan attached.

Surveying too often. Survey fatigue is real. Limit surveys to the key moments described above and avoid sending multiple surveys within the same 30-day period.

Not including an open-ended option. Scaled questions quantify satisfaction but don't explain it. Without at least one open-ended question, you'll know your score is dropping but not why.

Ignoring non-response bias. Customers who don't respond to surveys may have the most extreme opinions — either very positive (too busy to bother) or very negative (already disengaged). Don't assume your respondent pool represents all customers.

Frequently Asked Questions

What is a good customer satisfaction survey response rate?

For B2B companies, a response rate between 20–40% is typical for email-based surveys. In-app surveys often achieve higher rates of 40–60% because they reach customers during active product usage. If your response rate falls below 15%, revisit your survey length, timing, and subject line.

How many questions should a customer satisfaction survey have?

We recommend 5–7 questions for most surveys. Research shows completion rates drop significantly after 7 questions. For post-interaction feedback, even 2–3 questions can produce useful data. The key is to ask only questions whose answers will inform a specific action.

What is the difference between a CSAT survey and an NPS survey?

A CSAT survey measures satisfaction with a specific interaction or experience, usually on a 1–5 or 1–7 scale. NPS measures overall loyalty and willingness to recommend your company, on a 0–10 scale. CSAT is best for transactional feedback; NPS is best for long-term relationship health. Many companies use both at different touchpoints.

How often should I send customer satisfaction surveys?

It depends on the trigger. Post-interaction surveys (support, onboarding) should be sent immediately after the event. Relationship health surveys work well on a quarterly cadence. Pre-renewal surveys should go out 60–90 days before the renewal date. Avoid sending more than one survey type to the same customer within a 30-day window.
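If your surveys are triggered automatically, a small guard can enforce the 30-day window. This is a sketch under assumptions: `can_send_survey` is a hypothetical helper, and it presumes you store the date of the last survey sent to each customer.

```python
from datetime import date, timedelta


def can_send_survey(last_sent_on, today, cooldown_days=30):
    """Allow a new survey only if the cooldown has fully elapsed since the last one."""
    if last_sent_on is None:  # customer has never been surveyed
        return True
    return today - last_sent_on >= timedelta(days=cooldown_days)


last_sent = date(2026, 1, 10)
print(can_send_survey(last_sent, date(2026, 2, 1)))  # prints False (22 days)
print(can_send_survey(last_sent, date(2026, 2, 9)))  # prints True (30 days)
```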

Can customer satisfaction surveys actually reduce churn?

Yes — when acted on. Surveys themselves don't reduce churn, but the feedback they generate does. By identifying dissatisfied customers early and addressing their concerns, companies can intervene before disengagement turns into cancellation. This is especially powerful when CSAT data is combined with customer health scores and onboarding metrics to create a comprehensive risk-detection system.

See it in Action

A well-designed customer satisfaction survey is one of the highest-ROI activities a customer success team can invest in. It gives you a direct line to how customers feel, where friction exists, and what to fix next. The key is to keep surveys short, send them at the right moments, and — most importantly — act on the feedback you receive.

Use the 50+ questions and free templates in this guide to build your next survey. Start simple with one of the templates above, then iterate based on response rates and the quality of insights you collect.

If customer onboarding is a key driver of satisfaction for your business (and in B2B SaaS, it almost always is), consider embedding satisfaction checkpoints directly into your onboarding workflow. OnRamp makes it easy to build automated feedback loops into every stage of the customer journey — so you catch issues early and keep customers on the path to success. Get a Demo →

Melissa Scatena is the Marketing Operations Lead at OnRamp with deep experience across customer success, onboarding, and revenue operations. She leads customer events and regularly travels across the country working alongside customer success leaders, bringing real-world insights into how high-performing teams scale post-sale growth.
